5 important things you need to know about Docker

The world we live in is changing fast, especially in IT. Every day new tools appear, others fall out of use, and it is up to us to adapt or be left behind. From time to time, though, a tool emerges that makes everything easier and, most importantly, helps IT teams work more efficiently.

Six years ago, Solomon Hykes made the lives of many developers a lot easier by helping found a business called Docker. The main purpose of Docker is to make containers easy to use. Although Docker 1.0 was released only in 2014, it quickly became very popular and its adoption has kept growing ever since.

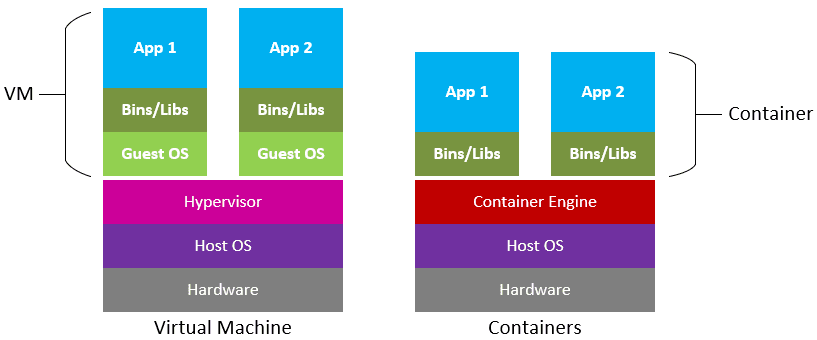

To understand the benefits of Docker containers, we need to go back to the time before containerization. Back then, the best way to isolate and organize applications and their dependencies was to place each app in its own virtual machine (VM). Several virtual machines shared the same physical hardware to run numerous apps, and this process is called virtualization.

The main difference between containerization and virtualization is that containers virtualize the host operating system (OS) rather than the hardware, and isolate each application's dependencies from other containers running on the same machine. As mentioned before, with virtualization multiple applications ran on the same physical hardware, each inside its own VM. This approach had a few drawbacks: virtual machines were bulky, because each one carried a full guest OS, and running many of them at the same time led to unstable performance. Unlike VMs, containers share the host kernel and do not reserve host resources for a guest OS of their own.

With that short explanation in place, we can say that Docker is one of the containerization platforms that can be used to create and run containers. Here are the five most important things you need to know about it.

1. Docker Hosting

Docker found its place in the IT world or, to be more precise, it was responsible for changing container technology by providing a toolset to easily create container images of applications.

The biggest perk of Docker hosting is that it provides a complete environment to build and run applications. It consists of the Docker daemon, images, containers, networks, and storage. The Docker daemon is a service that runs on your host operating system. It is responsible for all container-related actions, and it receives its commands through the CLI or the REST API. It can also communicate with other daemons to manage Docker services. The client makes a request and the Docker daemon carries it out: it pulls or builds a container image, following a set of instructions known as a build file (the Dockerfile), and then creates a container from that image. Long story short, building a Dockerfile produces a Docker image, and running that image produces a Docker container.
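The Dockerfile, image, container chain can be sketched in a few lines of Python. This is a minimal illustration, not a full workflow: the image tag `hello-docker:demo`, the Dockerfile contents and the helper names are assumptions made up for this example, while `docker build -t` and `docker run --rm` are standard Docker CLI commands. The script only assembles and prints the commands; it does not talk to the daemon itself.

```python
from __future__ import annotations

# Hypothetical tag and Dockerfile, used only for illustration.
IMAGE_TAG = "hello-docker:demo"

DOCKERFILE = """\
FROM alpine:3.19
CMD ["echo", "hello from a container"]
"""


def build_command(tag: str, context_dir: str) -> list[str]:
    """Step 1: the daemon reads the Dockerfile in context_dir and builds an image."""
    return ["docker", "build", "-t", tag, context_dir]


def run_command(tag: str) -> list[str]:
    """Step 2: the daemon creates and starts a container from that image.

    --rm removes the container again once its process exits.
    """
    return ["docker", "run", "--rm", tag]


def lifecycle(tag: str, context_dir: str) -> list[list[str]]:
    """Dockerfile -> image -> container, as an ordered list of CLI commands."""
    return [build_command(tag, context_dir), run_command(tag)]


if __name__ == "__main__":
    print("# Dockerfile")
    print(DOCKERFILE)
    for command in lifecycle(IMAGE_TAG, "."):
        print("$", " ".join(command))
```

To try it for real, save the Dockerfile text into an empty directory and run the two printed commands there, or pass each command list to `subprocess.run` on a machine where the Docker daemon is running.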

2. Using Docker will save you time & money

Setup processes can take a lot of time, and they can be pretty expensive too. Using Docker can save you a lot of that time, because deployment automation can cut the time you spend in half, if not more. We all know that time is money. Docker also lets you reduce what you spend on dedicated staff and on infrastructure. Because containers do not reserve memory and disk space up front, whatever they leave unused can be shared between instances. You also won't need to worry as much about the cost of bringing services back up, since different services can be packed onto the same hardware.

3. Isolation

As we mentioned in the beginning, every application runs isolated from the others, and containers achieve this without giving each app its own guest OS, which is the main difference between containers and virtual machines. Docker makes sure that each container has its own resources, isolated from other containers. The best thing is that you can have separate containers for separate apps running totally different stacks. Thanks to this isolation, when you no longer need an application, you can just delete its container and it won't leave application files scattered across your host operating system.
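As a rough sketch of that workflow, the snippet below plans the commands for starting two unrelated stacks side by side and then removing them again. The container names `demo-web` and `demo-cache` and the helper functions are hypothetical, chosen only for this example; `nginx:alpine` and `redis:alpine` are public images, and `docker run -d --name` and `docker rm -f -v` are standard Docker CLI commands. The script only prints the commands.

```python
from __future__ import annotations

# Hypothetical container names mapped to public images on different stacks.
APPS = {
    "demo-web": "nginx:alpine",
    "demo-cache": "redis:alpine",
}


def start_command(name: str, image: str) -> list[str]:
    """Start one app in its own container.

    -d runs it in the background; --name gives it a handle for later removal.
    """
    return ["docker", "run", "-d", "--name", name, image]


def remove_command(name: str) -> list[str]:
    """Delete the app's container.

    -f stops it first if it is still running; -v also removes its anonymous volumes.
    """
    return ["docker", "rm", "-f", "-v", name]


def plan(apps: dict[str, str]) -> list[list[str]]:
    """Start every app, then remove every app, in insertion order."""
    commands = [start_command(name, image) for name, image in apps.items()]
    commands += [remove_command(name) for name in apps]
    return commands


if __name__ == "__main__":
    for command in plan(APPS):
        print("$", " ".join(command))
```

Removing a container this way deletes its writable filesystem layer. The downloaded images and any named volumes stay on the host until they are removed separately.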

4. Security

One of the most important benefits of using Docker is security. Thanks to the previous perk, isolation, applications run in a more secure environment. Applications running in containers are isolated from each other, which gives us control over traffic flow and management. This segregation means that, by default, a Docker container cannot look into the processes running inside another container.

5. Standardization

Last but definitely not least is the standardization that Docker containers bring, which ensures consistency across development, test and production environments. It's safe to say that this is maybe the biggest advantage of a Docker-based architecture. Engineers are better equipped to analyze and fix bugs efficiently, because standardizing the service infrastructure throughout the entire pipeline lets every team member work in an environment that matches production. Here again we see how the five things pointed out as pros of using Docker connect: standardization cuts the time wasted on defects and frees up more time for feature development.

To sum up, Docker containers share the host's OS kernel and run as isolated processes on top of it. This means that the same container can run in any environment where Docker is available: on any computer, any infrastructure and in any cloud. The reasons why Docker is seen as the future of the IT world are pretty obvious, and now is the right time to hop on the Docker bandwagon!